Why You Should Join: Inside Edition

Suggestions from Bogomil Balkansky (Sequoia), Shardul Shah (Index), Alex Kolicich (8VC), Ali Partovi and Suzanne Xie (Neo), and Arash Afrakhteh (Pear).

TL;DR: if you’re open to new roles, Chkk, Footprint, Ollama, Cursor, and Orby AI are all incredibly promising startups and worth checking out.

If you’re interested in roles with any of the companies we’ve covered (or the unreleased ones we have in our pipeline 👀), please fill out this form to join our talent network.

Welcome to “Why You Should Join,” a monthly newsletter highlighting early-stage startups on track to becoming generational companies. On the first Monday of each month, we cut through the noise by recommending one startup based on thorough research and inside information we’ve received from venture firms we work with. We go deeper than any other source to help ambitious new grads, FAANG veterans, and experienced operators find the right company to join. Sound interesting? Join the family and subscribe here:

January 2024

As you know, the goal of this newsletter is to highlight promising early-stage companies to join, and to do so in a way that’s thorough, unbiased, and (occasionally) entertaining.

We do this because startups are risky business, and we think people deserve a quality resource to help figure out which ones are actually worth joining — one that’s less shallow, biased, and hype-driven than most of what’s out there.

Our philosophy in doing this has been to offer the opposite of one of those “Top Startups” lists. To us, it’s important to understand why a company might succeed before joining it, and we felt most resources recommending companies weren’t going deep enough to be helpful with that. By cherry-picking and deeply analyzing just one company each month (all from the portfolios of great investors), we hoped to provide a better starting point for people exploring their next roles.

In our quest to achieve this, we’ve naturally ended up talking with lots of VCs.

We do this to gather information on which startups are doing well, rumors on which startups aren’t doing so well, and (most importantly) suggestions for overpriced coffee shops.

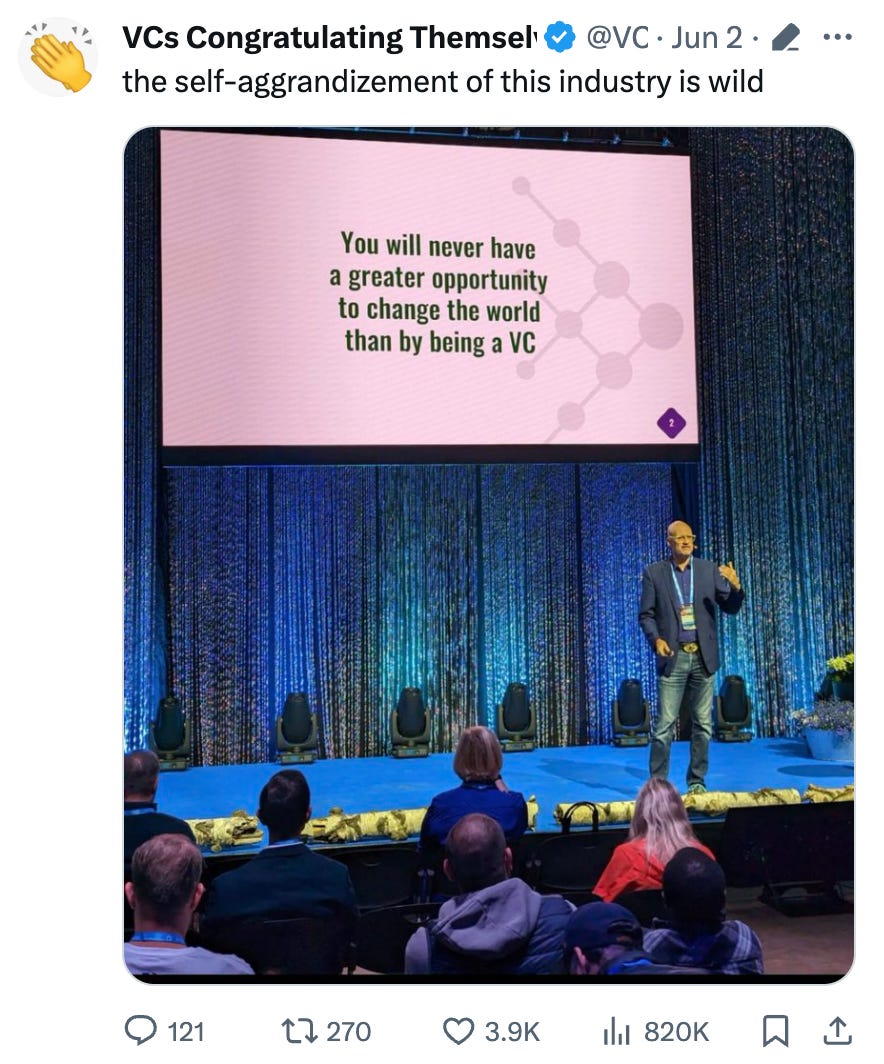

Now, we know investors get a bad rap on Twitter

…and it’s true, we’ve left a few meetings scratching our heads.

But most of the investors we’ve met have actually really impressed us with their thoughtfulness, curiosity, and insight.

We recently began thinking it’d be worth sharing some of their perspectives more directly — many of you have reached out for additional company suggestions, so we thought something here might be a good way to help out (especially since it’s hard for us to write more than one piece ourselves each month).

With that in mind, we invited several investors we respected deeply to share their perspectives on some companies we were both excited about.

This new series is the result.

Our first edition features five companies in total, each backed by a different firm and each at an early stage. At the bottom of each investor-contributed piece, we’ve added a few editor’s notes on the company and why we admire the investor who’s recommending it.

Not everyone has a VC they can DM for advice when they’re thinking about their next role.

We hope this will be the next best thing :)

Why You Should Join Chkk

Preventing Kubernetes Problems Before They Start

Kubernetes is designed for flexibility. That’s part of what has made it the de facto standard for cloud-native applications, with a recent Cloud Native Computing Foundation survey finding 96% of organizations are using or evaluating the platform. But that flexibility also comes with a cost: complexity. To take full advantage of K8s’ vibrant ecosystem of add-ons, teams must navigate multiple configs and track intricate dependencies across a maze of open-source and commercial products—and one seemingly minor mistake can cause a major chain reaction.

For organizations with business-critical apps on Kubernetes, availability and uptime are paramount, but most of the tools for uptime and availability only alert you about problems after they have occurred. Platform Engineering, SRE, and DevOps teams are forced to wait and see, risking credibility and wasting time on reactive fire-fighting after even routine upgrades.

But now there is a better solution: Chkk, a platform for proactively identifying and addressing infrastructure errors, disruptions and failures before they ever lead to downtime. Just as an X-ray scans the human body to spot health issues before they land you in the ER, Chkk finds and diagnoses availability risks in Kubernetes environments.

Co-founders Awais Nemat, Fawad Khaliq and Ali Khayam previously worked together on the AWS team that built Elastic Kubernetes Service (EKS), where they had a key insight: if one enterprise experienced a disruption, the root cause was likely to affect other enterprises, too. Rather than all of those organizations responding reactively, one by one, why not share their knowledge and prevent problems in the first place?

At the heart of this Collective Learning Technology is Chkk’s Availability Risk Signature Database, which tracks hundreds of Kubernetes issues sourced from publicly available information such as incident reports, release notes and forum discussions (along with data from Chkk’s own users) to populate a Knowledge Graph of risks. It is very similar to how the security industry tracks CVEs. The platform then streams that information to customers and contextualizes it against their own infrastructure, detecting and remediating issues before they cause disruptions. In other words, teams get to learn from each other’s mistakes, so they don’t have to repeat them.
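To make the idea of contextualizing a shared risk database against one customer's infrastructure concrete, here is a minimal sketch. It is not Chkk's implementation: the `RiskSignature` type, the `find_risks` helper, the component names, and the version numbers are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskSignature:
    """Hypothetical record for one known availability risk."""
    risk_id: str
    component: str                 # add-on or control-plane component name
    affected_versions: frozenset   # versions known to trigger the issue
    summary: str


def find_risks(inventory, signatures):
    """Return the signatures whose component and version appear in the inventory.

    `inventory` maps component name -> installed version for one cluster.
    """
    return [
        sig for sig in signatures
        if inventory.get(sig.component) in sig.affected_versions
    ]


# Made-up signatures standing in for a shared risk database.
SIGNATURES = [
    RiskSignature("R-001", "example-dns", frozenset({"1.8.0", "1.8.1"}),
                  "Upgrade can drop cached records"),
    RiskSignature("R-002", "example-ingress", frozenset({"2.0.0"}),
                  "Config reload fails with deprecated annotation"),
]

# Made-up inventory for a single customer's cluster.
CLUSTER = {"example-dns": "1.8.0", "example-ingress": "2.1.3"}

matches = find_risks(CLUSTER, SIGNATURES)
for sig in matches:
    print(f"{sig.risk_id}: {sig.component} {CLUSTER[sig.component]} -> {sig.summary}")
```

The point of the sketch is the division of labor: the signature list is shared across every customer, while the inventory is specific to one cluster, so a problem first seen in one environment can be flagged in another before it causes an outage.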

Chkk counts Alef Edge, Fairtiq, Nexoya, Phi Labs, Yoti and many others among their happy customers, and they’ve been getting impressive feedback since the launch of their Kubernetes Availability Platform in late October. As the new year begins, many more organizations will no doubt be on the hunt for enhanced Kubernetes resiliency and an insurance policy against outages in 2024—and Chkk may be just the solution they’re looking for.

— Bogomil Balkansky, Partner at Sequoia

Chkk is hiring talented software engineers for their North America team - if you’re interested (and especially if you have experience with Go, Kubernetes, and AWS/GCP/Azure), please reach out to their team here. We also recommend checking out the rest of Bogomil’s portfolio at Sequoia (e.g. Mutiny, Temporal, and Wiz). Bogomil was previously VP Cloud Recruiting Solutions at Google and Senior VP of Cloud Infrastructure Products at VMware. He also runs a most excellent cooking blog.

Why You Should Join Footprint

Solving for Fraud and Friction

The conventional wisdom has been that businesses face a tradeoff when they onboard a customer: onboard more users while accepting more fraud, or add friction to processes to prevent bad actors at the cost of good actors. Both options are bad. Businesses lose billions of dollars a year due to fraud, while missing out on customers due to the friction put in place to avoid the fraud. Footprint is the first company I met that had a plan to solve for both fraud and friction.

They do this with a fundamentally different philosophical approach to onboarding. It starts with perhaps a paradox: they don’t believe you solve fraud by solely looking for bad actors. This is especially true in an age of Generative AI giving fraudsters even more tools to generate an infinite amount of real-looking identities. This is because the Footprint team knows we will never find every bad actor. Instead, they are creating a closed-loop ecosystem that begins to firmly place proven good actors in their own bucket.

To do this, Footprint has built a compound platform. They built fully integrated KYC, Security, and Auth suites that could beat industry incumbents standalone, and together are magical. Companies have trusted their vaults with millions of PII records, their flows to onboard thousands of people a day, and their auth to prevent ATOs and phishing. Their product suite sits on top of novel technology they are pioneers in bringing to market. Footprint leverages App Clips and Passkeys to stop duplicate fraud in real time and cryptographically verify that the person who created an account is the same person logging back onto a platform. Meanwhile, they are one of a handful of companies built on AWS Nitro Enclaves to securely vault the data.

The result is a flawless developer experience. It takes five lines of code to integrate a hosted onboarding flow that collects PII, verifies it, vaults it, and re-authenticates the subject. Traditionally these were point solutions that did not work together. Footprint has realized the 1+1=3 nature across these tools, and the result is the future of identity: one where it is portable. They realized if you solve security at onboarding you can solve friction going forward, and once you solve friction you can solve fraud.

Footprint is a deeply technical team with deep expertise in the problem area. Alex, the CTO, studied cryptography at MIT before starting a mobile authentication company that would be acquired by Akamai. Their fraud and risk team includes leaders from Stripe and Sardine, while their frontend and design helped scale early products at companies such as Personio, Kyte, and Observe.

Footprint counts a variety of delighted customers. These range from investment platforms with over a million users like Bloom, to leading car rental marketplaces like Flexcar, to tenant screening platforms servicing hundreds of thousands of units such as Findigs.

— Shardul Shah, Partner at Index

Footprint is hiring talented frontend and backend engineers - if you’re interested, please reach out to their team here. We also recommend checking out the rest of Shardul’s portfolio at Index (e.g. Twelve Labs, Expel, and Wiz). He has a remarkable track record and is an expert on all things security and cloud infrastructure.

Why You Should Join Ollama

Run Foundation Models on Your Machine

In the world of software development, it’s critical to have an efficient and fluid local development workflow. This axiom is reflected in the world of dev tooling, where the most exceptional projects & products are often the ones that introduce the greatest developer delight. When developers are bottlenecked by package management, version conflicts, and merge & branching issues, and have to frequently leave their IDE and CLI, all they really want to do is close their laptop, smash a keyboard, and/or unplug a monitor. However, when DevEx is optimized, the beatings no longer need to continue until morale improves.

As developers want to incorporate LLMs into their projects and applications, they’ve now incurred a new set of headaches. The majority of model usage is vendor-hosted, and the developer experience is tied to the cloud – devs in the experimentation phase now need to deal with latency overheads, rate limiting by model providers, and a whole host of information security concerns (let’s maybe not send personal emails over the wire). However, the advent of open-source models with strong reasoning capabilities and near-performance-parity has paved the way for new experiences with local LLMs, both in experimentation and in production.

Unfortunately, this pain also extends beyond the development cycle. Application programmers who would like to package their products to run locally (e.g. a Rewind) have to build their own solution to handle model downloading & config management, execution on CPU / GPU / heterogeneous underlying hardware, and performance optimization.

Enter Ollama, the best way to get started with local LLMs. They make it seamless for developers to run models like LLaMa 2, Mistral 7B, and even multi-modal models like LLaVA locally. It’s available today for macOS and Linux (with GPU-based acceleration), with Windows compatibility on the horizon.

Under the hood, Ollama leverages llama.cpp to run quantized models that can fit in memory and a distribution framework similar to Docker to enable downloading & storing models. They support importing models from formats like GGUF/GGML and offer the flexibility to customize models with the beautiful abstraction of a Modelfile (akin to a Dockerfile) – enabling users to configure prompts, base models, and in the future potentially fine-tunes / LoRAs. In creating a Modelfile, Ollama users can now distribute metadata & data along with models in a self-contained way.
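As a rough illustration of the Modelfile idea, the sketch below renders a small Modelfile as text using the `FROM`, `PARAMETER`, and `SYSTEM` instructions. The `render_modelfile` helper is hypothetical (it is not part of Ollama), and the base model name, temperature, and system prompt are placeholder assumptions.

```python
def render_modelfile(base_model, system_prompt, parameters=None):
    """Build Modelfile text from a base model, a system prompt, and parameters.

    Hypothetical helper for illustration; Ollama itself only needs the
    resulting text file.
    """
    lines = [f"FROM {base_model}"]
    for key, value in (parameters or {}).items():
        lines.append(f"PARAMETER {key} {value}")
    # Triple quotes let the system prompt span multiple lines if needed.
    lines.append(f'SYSTEM """{system_prompt}"""')
    return "\n".join(lines) + "\n"


# Placeholder values: swap in whichever base model and prompt you actually use.
modelfile_text = render_modelfile(
    base_model="llama2",
    system_prompt="You are a concise assistant for code review.",
    parameters={"temperature": 0.2},
)
print(modelfile_text)
```

Saved to a file named `Modelfile`, text like this can be turned into a runnable local model with `ollama create <name> -f Modelfile` followed by `ollama run <name>`, which is what makes the format feel like a Dockerfile for models.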

Additionally, Ollama has a wealth of integrations, including terminal clients, UI wrappers (e.g. for chatbots), and libraries like Langchain to enable local RAG. They also have a handful of community-contributed plugins that allow for the seamless incorporation of Ollama models into everyday software applications like Discord, Telegram, and Obsidian.

The Ollama team, led by Jeffrey Morgan and Michael Chiang, the former architects of Docker Desktop, brings unparalleled expertise in local development. They ship fast, and it shows with their ever-growing library of available models. Ollama has polish & deliberate abstractions that fundamentally help accelerate the software development lifecycle in the era of LLMs. If you’d like to join the 23K+ people who’ve starred the repo & downloaded Ollama over 1.3 million times, check it out for yourself here.

— Alex Kolicich, Founding Partner at 8VC

If you’re a talented software, systems, or ML engineer interested in learning more about Ollama, check out their GitHub and Discord communities, or reach out to jeff@ollama.ai / michael@ollama.ai. We also recommend checking out the rest of Alex’s portfolio at 8VC. Prior to being a founding partner at the firm, Alex was an engineer and early-product advisor at Clarium, Palantir, and Google.

Why You Should Join Cursor

The AI-powered Code Editor

An incredible team, a large market, ambitious vision, and sky-rocketing traction are why you should join Cursor.

Cursor’s founders are a dream team of technical leaders from MIT who’ve won IMO/IOI medals, led one of the first LLM deployments at Google, and created two of the world’s most popular programming games. We knew them well before funding them: CEO Michael Truell and cofounder Aman Sanger were among the most talented and entrepreneurial Neo Scholars. We’d have backed either of them in a heartbeat, let alone together. They were joined by two of their smartest friends, Arvid and Sualeh, making one of the most potent young startup teams anywhere.

Their vision and market are extremely ambitious: to automate all software engineering. This is an opportunity that doesn’t just cap out at a few billion dollars and could lead to a generational company. The vision is personally exciting to us at Neo given our community's strong CS roots. The Cursor team has been programming since middle school and working on AI for almost as long. Redesigning programming with AI feels like what they were born to do.

There are many goliaths competing for the same prize, and Cursor has surprising advantages over other players. We're betting on their three-pronged approach of growing virally down-market, owning the entire editor, and building a tool that’s already useful today – it’s reminiscent of Figma's winning strategy. A year after our investment, they raised an $8M war chest from OpenAI, securing them special model access. Remarkably, Cursor is already becoming one of the most-used code editors in the world, a testament to both their team and their strategy.

Cursor is used by tens of thousands of engineers every day, including those at companies like Ramp, OpenAI, and Midjourney. Their rapidly-growing paid subscriber base is already generating over $2M in ARR, despite launching just eight months ago. Notably, this growth is fueled organically by word-of-mouth, a hallmark of some of the most successful companies in tech history. Their recent releases of faster AI-powered edits, linting, terminal commands, and image support have only accelerated growth.

Cursor is a lean team where each engineer gets lots of responsibility for research and product decisions, and even interns get to own projects end-to-end. This often involves very challenging problems in ML, product engineering, performance, and infrastructure. For instance, their upcoming “Copilot++” release has involved training a custom model from proprietary data, clever inference caching, careful networking, and UI experimentation. Cursor’s team takes pride in offering an intense learning environment, with a mantra: “You will learn more here than you will anywhere else for the rest of your life.”

— Ali Partovi and Suzanne Xie, Partners at Neo

Cursor (Anysphere) is hiring talented founding engineers and researchers in San Francisco - if you’re interested, please reach out to their team here. We also recommend checking out the rest of Ali and Suzanne’s portfolio at Neo (e.g. Warp, Pavilion, and Vanta). Both are experienced operators - Suzanne was previously Head of Product for B2B Payments at Stripe and Ali was previously Co-Founder of Code.org and LinkExchange (acquired by Microsoft). If you’re a college student studying CS, we also highly recommend checking out Neo Scholars.

Why You Should Join Orby AI

Revolutionize Your Workflow with Tailored Automation

In today's fast-paced business environment, organizations are bogged down with an overwhelming amount of manual document processing, validation, and organization tasks. This reality spans across various roles – from HR associates managing employee onboarding to sales professionals tracking leads, and finance managers handling invoices. These repetitive tasks, although seemingly brief, cumulatively consume a significant portion of an employee's time over months or even years.

While existing Robotic Process Automation (RPA) tools like UiPath and Automation Everywhere have made strides in addressing these challenges, they often stumble when facing complex, dynamic workflows. They typically require extensive initial setup and programming, limiting their scope to tasks defined by strict rules – representing merely 20% of potential automation opportunities.

Orby AI emerges as a groundbreaking solution, going beyond the limitations of traditional RPA. By integrating advanced AI with observational learning, Orby AI streamlines the automation process, removing the need for tedious programming. Upon installation, Orby AI intelligently observes and learns from a user's interactions, understanding the intricacies of their job and the applications they use. This "observe, learn, and automate" approach rapidly identifies inefficiencies, offers optimization suggestions, and, over time, independently executes repetitive tasks, continually refining its performance based on user feedback.

What sets Orby AI apart is its ability to adapt to each user's unique workflow. Every Orby AI instance is trained to mirror the specific work habits of its user, evolving into a personalized assistant. This not only frees up time for more creative and high-impact tasks but also proposes innovative workflow enhancements. At its core, Orby AI is the first to offer a complete end-to-end automation platform, developed by training its own models on observed user workflows.

The vision for Orby AI was conceived by co-founders Bella Liu and Will Lu in 2022. Bella, with her experience in product leadership at UiPath, and Will, with his background in AI innovation at Google, recognized the limitations of current RPA tools. Their collaborative effort has resulted in Orby AI – a comprehensive solution designed to tackle the entirety of repetitive tasks, far beyond the conventional 20%, positioning it as an indispensable partner in workflow automation.

— Arash Afrakhteh, Partner at Pear

Orby AI is hiring talented software engineers, research scientists, and account executives - if you’re interested, please reach out to their team here. We also recommend checking out the rest of Arash’s portfolio at Pear. Arash is highly technical and was previously Co-Founder and CTO of Cariden Technologies, a bootstrapped infra startup acquired by Cisco.

Conclusion

If you’re open to new roles or curious about their products, Chkk, Footprint, Ollama, Cursor, and Orby AI are all promising companies and worth checking out.

Happy New Year, and until next month!

Thanks to Bogomil, Shardul, Alex, Vivek, Ali, Suzanne, Arash, Pejman, and Jill for their help with this piece.

In case you missed our previous releases, check them out here:

And to make sure you don’t miss any future ones, be sure to subscribe here:

Finally, if you’re a founder, employee, or investor with a company you think we should cover, please reach out to us at ericzhou27@gmail.com and uhanif@stanford.edu - we’d love to hear about it :)